Projects

Team : ALMASTY

Resistance of cryptographic systems

The Cryptanalyse project studies cryptographic primitives and their standardization. Modern cryptography has become an indispensable tool for securing personal, commercial and institutional communications. The project will provide estimates of the difficulty of solving the underlying problems and, from these, derive the level of security that these primitives provide.

The central question is how to evaluate the security of cryptographic algorithms.

Project Leader : Charles Bouillaguet

01/10/2023

Key sizes: practical and theoretical optimizations and modern approaches for precise estimates of the cost of NFS

Project Leader : Charles Bouillaguet

01/10/2021

Secure distributed computation: Cryptography, Combinatorics, Computer Algebra

Project Leader : Damien Vergnaud

10/09/2021

Team : ALSOC

Formal verification and resilience to physical attacks of hardware countermeasures

Project Leader : Emmanuelle Encrenaz

08/04/2025

Modeling and Verification for Secure and Performant CPS

Project Leader : Daniela Genius

01/10/2023

Design of secure systems by reducing the effects of the micro-architecture on side-channel attacks

Project Leader : Quentin Meunier

01/10/2020

TSAR - TSAR (Tera-Scale ARchitecture)

Project Leader : Alain Greiner

27/09/2017

Team : APR

Analysis of parameters of classes of DAGs

Project Leader : Antoine Genitrini

01/10/2023

Leveraging Software Heritage to Enhance Cybersecurity

Project Leader : Antoine Mine

01/10/2023

Raisonnements formellement certifiés en apprentissage automatique

Project Leader : Antoine Mine

01/10/2023

Improving Digital Systems Security Evaluation

Project Leader : Antoine Mine

01/07/2022

TORI - In-situ Topological Reduction of Scientific 3D Data

TORI (In-situ Topological Reduction of Scientific 3D Data) is an ERC Consolidator research project started in October 2020 and coordinated by Julien Tierny. It aims at addressing the explosion in size and complexity of large-scale data by developing the next generation data reduction tools based on topological data analysis.

Project Leader : Julien Tierny

01/10/2020

Team : BD

Enriching a knowledge base from uncertain medieval prosopographical data

Project Leader : Camelia Constantin

01/11/2025

https://scanr.enseignementsup-recherche.gouv.fr/projects/ANR-25-CE38-0700

experimaestro - Planning and management of computer experiments

Experimaestro is an experiment manager built around a server that provides a job scheduler (job dependencies, locking mechanisms) and a framework for describing experiments in JavaScript or Java.
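
To illustrate what scheduling jobs under dependency constraints involves, here is a minimal Python sketch. It does not use the Experimaestro API: the job names and the `run_order` helper are hypothetical, and the ordering relies only on the standard-library `graphlib` module.

```python
from graphlib import TopologicalSorter


def run_order(dependencies):
    """Return one valid execution order for a set of jobs.

    `dependencies` maps each job name to the set of jobs it must wait for.
    (Hypothetical helper, not part of Experimaestro.)
    """
    return list(TopologicalSorter(dependencies).static_order())


# Hypothetical experiment: evaluation needs a trained model,
# and training needs preprocessed data.
jobs = {
    "preprocess": set(),
    "train": {"preprocess"},
    "evaluate": {"train"},
}

order = run_order(jobs)
print(order)
```

A real scheduler would also have to handle locking and failures; this sketch only shows how dependencies constrain the order in which jobs can start.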

Project Leader : Benjamin PIWOWARSKI

01/01/2016

SPARQL on Spark - SPARQL query processing with Apache Spark

A common way to achieve scalability for processing SPARQL queries over large RDF data sets is to choose map-reduce frameworks like Hadoop or Spark. Processing complex SPARQL queries that generate large join plans over distributed data partitions is a major challenge in these shared-nothing architectures. In this article we are particularly interested in two representative distributed join algorithms, partitioned join and broadcast join, which are deployed in map-reduce frameworks for the evaluation of complex distributed graph pattern join plans. We compare five SPARQL graph pattern evaluation implementations on top of Apache Spark to illustrate the importance of carefully choosing the physical data storage layer and of being able to use both join algorithms to take existing predefined data partitionings into account. Our experiments with different SPARQL benchmarks over real-world and synthetic workloads emphasize that hybrid join plans introduce more flexibility and can often achieve better performance than join plans using a single kind of join implementation.
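
The difference between the two join algorithms named above can be sketched in plain Python. This is an illustration under simplifying assumptions, not the implementation evaluated in the article and not Spark code: partitions are simulated with lists, and all function names and the sample triples are hypothetical.

```python
from collections import defaultdict


def hash_join(left, right):
    """Join two lists of (key, value) pairs on key with an in-memory hash table."""
    table = defaultdict(list)
    for key, value in right:
        table[key].append(value)
    return [(key, lv, rv) for key, lv in left for rv in table.get(key, [])]


def partition(rows, n):
    """Split rows into n simulated partitions by hashing the join key."""
    parts = [[] for _ in range(n)]
    for key, value in rows:
        parts[hash(key) % n].append((key, value))
    return parts


def partitioned_join(left, right, n):
    """Repartition both inputs on the join key, then join partition by partition."""
    result = []
    for lpart, rpart in zip(partition(left, n), partition(right, n)):
        result.extend(hash_join(lpart, rpart))
    return result


def broadcast_join(left_parts, small_right):
    """Leave the large input's partitions in place and copy the small input to each."""
    result = []
    for lpart in left_parts:
        result.extend(hash_join(lpart, small_right))
    return result


# Hypothetical data for the pattern { ?x knows ?y . ?y name ?n }, keyed on ?y.
knows = [("bob", "alice"), ("carol", "alice"), ("dave", "bob")]
names = [("bob", "Bob"), ("carol", "Carol")]

by_partition = sorted(partitioned_join(knows, names, 3))
by_broadcast = sorted(broadcast_join(partition(knows, 2), names))
print(by_partition)
print(by_broadcast)
```

Both strategies return the same rows. The partitioned join moves both inputs so that matching keys meet in the same partition, whereas the broadcast join leaves the large input where it is and replicates the small one, which is why the best choice depends on input sizes and on how the data is already partitioned.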

Project Leader : Hubert NAACKE

01/01/2015

http://www-bd.lip6.fr/wiki/en/site/recherche/logiciels/sparqlwithspark

BOM - Block-o-Matic!

Block-o-Matic is a Web page segmentation algorithm based on a hybrid approach that combines scanned-document segmentation and vision-based content segmentation. A Web page is associated with three structures: the DOM tree, the content structure and the logical structure. The DOM tree represents the HTML elements of a page, the content structure organizes the content according to its category and geometry, and the logical structure results from mapping the content structure onto a human-perceptible meaning. The segmentation process is divided into three phases: analysis, understanding and reconstruction of a Web page. An evaluation method is proposed for assessing Web page segmentations against a ground truth of 400 pages classified into 16 categories. A set of metrics is presented based on the geometric properties of the blocks. Satisfactory results are obtained in comparison with other algorithms following the same approach.

Project Leader : Andrès SANOJA

01/01/2012

Team : CIAN

ARCHitectures based on unconventional accelerators for dependable/energY efficienT AI Systems

Project Leader : Haralampos Stratigopoulos

01/12/2024

Trusted distributed bio-inspired systems: theoretical foundations and hardware implementation

Project Leader : Haralampos Stratigopoulos

01/10/2023

Trusted SMEs for Sustainable Growth of Europeans Economical Backbone to Strengthen the Digital Sovereignty

Project Leader : Haralampos Stratigopoulos

01/10/2023

A network of excellence for distributed, trustworthy, efficient and scalable AI at the Edge

Project Leader : Haralampos Stratigopoulos

01/09/2023

Understanding and mitigating errors in analog silicon implementations of neural networks

Project Leader : Haralampos Stratigopoulos

01/10/2022

Mechanical energy harvesting close to physical limits through adiabatic synthesis of electromechanical dynamics

C23/0800

Project Leader : Dimitri Galayko

01/10/2022

Reliable hardware architectures for trustworthy Artificial Intelligence

Project Leader : Haralampos Stratigopoulos

25/01/2022

CORIOLIS - Platform for the physical synthesis of integrated circuits

Coriolis is a software platform for algorithm research, tool development and the evaluation of new VLSI physical design flows. Current nanometer-scale process technologies pose new challenges for CAD flows. Academic research often addresses overly specific problems in isolation from other algorithms, for lack of a way to interoperate with them. Yet it is crucial to be able to evaluate the interactions between the different tools within a complete design flow. The CORIOLIS platform, written in C++, is designed to enable interoperability between the software building blocks that use it. It currently offers solutions to the partitioning, placement and routing problems for digital circuits in nanometer technologies.

Project Leader : Jean-Paul CHAPUT

01/01/2004

CAIRO - Circuits Analogiques Intégrés Réutilisables et Optimisés (Reusable and Optimized Integrated Analog Circuits)

The goal of the CAIRO project is to develop methods and tools that enable the reuse of analog cells and the capture of designer knowledge in the form of IP (Intellectual Property) cells that are portable from one technology to another and from one set of specifications to another. The CAIRO+ language, a set of C++ functions, is a language for creating analog IPs that makes it possible to structure, formalize and largely automate the analog design flow. It is used to write a procedure, called a "generator", for an analog cell. At the current stage of the project, the electrical structure of the cell (i.e. the unsized schematic) is fixed by the designer. The generator must size the components of the cell (transistor sizes, capacitor values, etc.) and synthesize the layout, all as a function of the cell specifications and the technology parameters. Writing the cell generator is the designer's responsibility, in particular the part that deals with the electrical sizing of the circuit. One of the strengths of CAIRO+ is the ability to synthesize the layout almost automatically from the sized schematic: the layout generation function is one of the "native" modules of the language. In addition, electrical sizing can take layout parasitics into account (we say "can" because this depends on the designer who defines the sizing procedure). In that case, several "cell sizing, layout synthesis" cycles may be necessary. One of the group's areas of interest is the design of continuous-time sigma-delta modulators. In this activity we strive to capitalize on the design effort by developing methods and tools that allow results to be reused. The complex structure of the modulators, which include a large number of cells with identical functionality but different specifications (such as GmC cells and amplifiers), offers a suitable context for applying the methodology implemented in CAIRO+.

Project Leader : Marie-Minerve LOUËRAT

01/01/2004

Team : DECISION

Algorithms for decision making and preference learning in multi-objective optimization

Project Leader : Nawal Benabbou

01/10/2024

aGrUM - a Graphical Unified Model

aGrUM is a C++ library for manipulating graphical models. Its scope is fairly broad: it is designed for learning (of Bayesian networks, for example), planning (FMDPs) and inference (Bayesian networks, GAI networks, influence diagrams).

Project Leader : Christophe GONZALES & Pierre-Henri WUILLEMIN

Team : DELYS

Frugal distributed algorithms at the core of networks

Project Leader : Pierre Sens

01/10/2024

Towards correct-by-construction serverless applications

Project Leader : Pierre Sens

01/10/2024

https://scanr.enseignementsup-recherche.gouv.fr/projects/ANR-24-CE25-5598

Virtualized disaggregation

Project Leader : Julien Sopena

01/09/2023

A new data paradigm: autonomous and intelligent data

Project Leader : Franck Petit

01/10/2022

Team : LFI

Pelvic neRves autOmatic Segmentation using hybrId Trustworthy AI

Project Leader : Isabelle Bloch

01/10/2025

Leveraging explanation models for deep learning algorithms

Project Leader : Christophe Marsala

01/10/2024

History of image agencies and computer vision

Project Leader : Isabelle Bloch

01/10/2024

Advanced methods for assisting interventional gastro-endoscopy

Project Leader : Isabelle Bloch

01/01/2024

Learning similarity measures for analogical transfer

Project Leader : Marie-Jeanne Lesot

01/10/2022

Premature Human Connectome Patterns: mapping the fetal brain development using extreme field MRI

Project Leader : Isabelle Bloch

01/10/2021

Team : MOCAH

PostGenAI - PAC 3.3

The project pursues two main objectives:

Develop reusable and flexible adaptive learning models that can adapt to different learner profiles and systems, building on a post-generative-AI approach.

Support the integration of generative AI in education through training, shared methods and recommendations, in order to assess its role in the transformation and industrialization of training.

Generative AI can also take over certain tasks that involve little creativity, freeing up time for teachers and fostering their creativity.

The research relies on a participatory approach to design the adaptive systems, and on the observation of industrial processes to analyze the impact of generative AI. Finally, several initiatives already exist in this area, notably at the LIP6 laboratory, which has contributed to the sharing of educational data and models.

Project Leader : Vanda Luengo

01/01/2025

AI for personalizing feedback in game-based learning of computational thinking

Project Leader : Sebastien Lalle

01/10/2024

Haptic simulator for spasticity training

Spasticity is a motor disorder characterized by muscle hyperactivity caused by impaired nerve conduction. Diagnosis relies on assessing the degree of resistance of the limb to a passive movement performed by the practitioner, and is used to determine the treatment to follow. However, this assessment remains subjective and requires practical experience. Only training on real patients allows that experience to be gained. In this context, the HASPA project aims to develop a simulator that reproduces different degrees of spasticity, so that young practitioners can practice before performing these gestures on patients.

Project Leader : Vanda Luengo

Partners : The consortium brought together for this multidisciplinary project consists of 6 public laboratories (AMPERE, CEA-List, CRNL, LBMC, LIP6, SYMME) and aims to build a haptic simulator offering suitable and relevant training for future practitioners.

01/10/2022

Adaptiv’Math - Adaptiv’Math

Adaptiv’Math, awarded under the Artificial Intelligence Innovation Partnership (P2IA) of the French Ministry of National Education and led by the startup EvidenceB, involves companies (Nathan, Daesign, Schoolab, Isograd, BlueFrog), two laboratories (LIP6 and Inria Bordeaux), APMEP (the association of mathematics teachers) and researchers in cognitive psychology (E. Sander) and neuroscience (A. Knopf). It aims to build a teaching assistant for Cycle 2 mathematics (CP, CE1, CE2) that relies on AI algorithms and on a set of exercises designed from advances in cognitive science.

We are working on an AI component that proposes groupings of students (clustering) learned across all classes on the basis of mastery criteria for mathematics skills. This clustering is then applied class by class at regular intervals to give the teacher a view of how their student groups evolve, making it easier to set up differentiated teaching strategies.

Project Leader : François Bouchet

01/10/2019

MAGAM - Multi-Aspect Generic Adaptation Model

MAGAM is a generic model based on matrix computation that adapts learning activities along several aspects (pedagogical, didactic, playful, motivational, etc.).

Project Leader : Vanda LUENGO and Baptiste MONTERRAT

01/03/2016

LEA4PA - LEarning Analytics for Adaptation and Personalisation

The LEarning Analytics for Personalization and Adaptation project aims to provide decision makers (teachers, learners, etc.) with algorithms and visualizations that support analysis of student behavior for adaptation and remediation. It applies to several levels of education. At the middle-school level, the research is carried out as part of a collaboration supported by the Direction du numérique pour l’éducation (MEN). In this context, we offer analyses (descriptive and diagnostic) of learners' algebra skills, together with visualizations, the goal being to assist the teacher in adapting activities [4]. For higher education, the research builds on the LA-PAD platform developed by CAPSULE (the pedagogical innovation center of Sorbonne Université). In this context, we seek to understand learners' paths using sequential analysis techniques and association rules.

Project Leader : Vanda LUENGO and Amel YESSAD

01/01/2016

Team : MoVe

Reducing the energy footprint of software through changes in user behavior

Project Leader : Adel Noureddine

01/09/2025

BCMCyPhy - Component model for cyber-physical control systems

This research project and its associated software aim to design and implement a component-based programming model for cyber-physical control systems. It builds on the BCM4Java project, from which it reuses the core concepts and the Java implementation of distributed components (10,000 lines of code and documentation to date). Besides integrating real-time components, the project studies component-based (automatic) control architectures, their specification using stochastic hybrid systems and their simulation using simulation models that follow the DEVS standard. Particular attention is paid to parallel composability between components, their specifications and their individual simulation models. A software sub-project of BCMCyPhy provides a new Java implementation of the DEVS standard for the modular simulation of components and their assemblies (20,000 lines of code and documentation to date). The simulators obtained by composing the simulators of each component are then used to debug, test, verify and validate applications. This software has been used by a few dozen students, and still is, in the ALASCA master's (M2) course since 2018.

Project Leader : Jacques MALENFANT

18/06/2019

PNML Framework

PNML Framework is the prototype implementation of the ISO/IEC-15909 standard (part 2), the standardized interchange format for Petri nets. The main goal of PNML is to achieve interoperability between Petri net tools. PNML Framework is designed to implement the standard as it is being developed, in order to assess its feasibility and to serve as a reference for the community's tools. It provides a manipulation API for creating, saving, loading and traversing Petri nets in PNML format.

Project Leader : Fabrice KORDON

01/04/2005

CPN-AMI

CPN-AMI is an environment built on FrameKit, a software integration platform that enables rapid coupling of tools from diverse origins. CPN-AMI is thus the most complete assembly of Petri-net-based verification tools. These tools were developed as part of the work of SRC or by academic partners (University of Turin, University of Helsinki, Bell Labs, University of Munich, Humboldt University of Berlin). CPN-AMI consists of a tool server and a remote user interface to which users connect.

Project Leader : Fabrice KORDON

01/12/1994

SPOT - Spot Produces Our Traces

SPOT (Spot Produces Our Traces) is an easily extensible model-checking library. Unlike existing model checkers, whose mode of operation is fixed, SPOT provides building blocks that users can combine to build a model checker that meets their own needs. This modularity makes it easy to experiment with different combinations and facilitates the development of new algorithms. In addition, the library is centered on a particular type of automaton that allows the properties to be verified to be expressed more compactly, and that had never been used in a tool before.

Project Leader : Denis POITRENAUD

Team : NPA

Towards a comprehensive pan-African research infrastructure in Digital Sciences

Project Leader : Serge Fdida

01/01/2025

Pushing the usual limits concerning the future of ubiquitous networks

Project Leader : Sebastien Tixeuil

01/10/2024

End-to-end Cybersecurity to NEMO meta-OS

Project Leader :

02/09/2024

Greener Future Digital Research Infrastructures

Project Leader : Serge Fdida

01/03/2024

SUstainable federation of Research Infrastructures for Scaling-up Experimentation in 6G

Project Leader : Serge Fdida

01/01/2024

Telecommunications and Computer Vision Convergence Tools for Research Infrastructures

Project Leader : Serge Fdida

01/02/2023

Cognitive and programmable security for the resilience of next-generation networks

Project Leader : Sebastien Tixeuil

01/10/2020

F-Interop - Remote interoperability testing services for connected objects (IoT)

Project Leader : Serge FDIDA

01/01/2016

Team : PEQUAN

Floating-Point Transformer 4

This project aims to use large language models to assist with the automatic analysis and transformation of floating-point code.

Project Leader : Fabienne Jezequel

01/10/2024

Mixed-precision algorithms for high-performance computing

Project Leader : Theo Mary

01/10/2023

Methods and Algorithms for Exascale

Project Leader : Pierre Jolivet

01/10/2023

https://numpex.org/exama-methods-and-algorithms-for-exascale/

Innovative architectures for distributed acoustic fiber-optic sensors

Project Leader : Fabienne Jezequel

01/10/2023

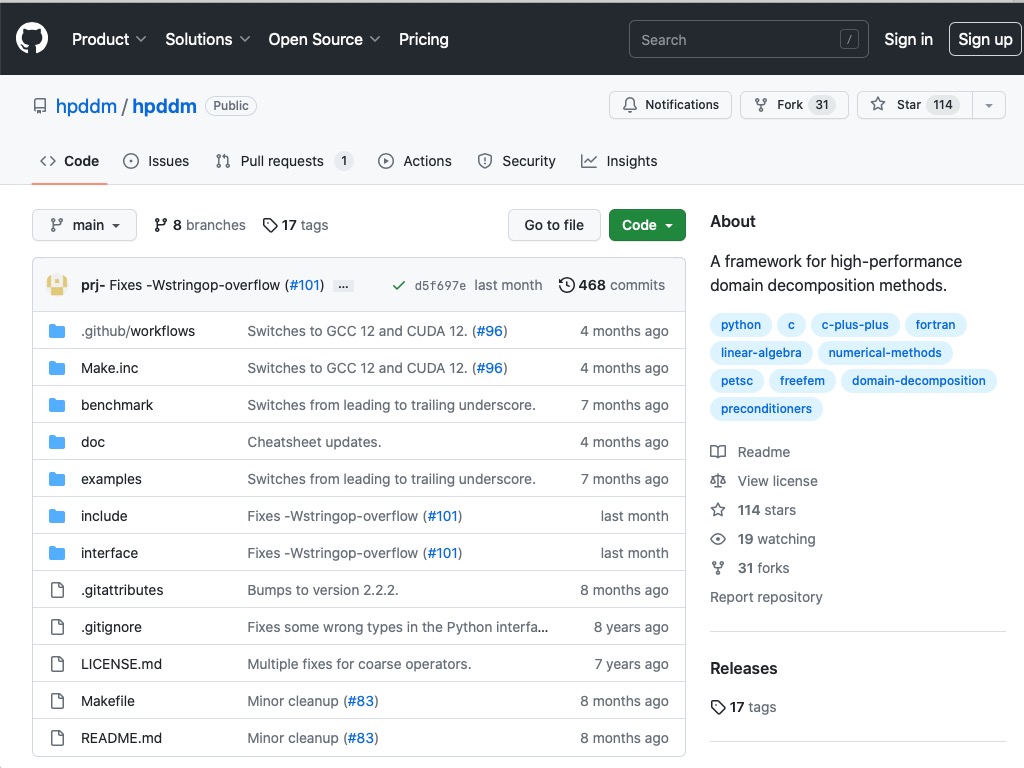

HPDDM - high-performance unified framework for domain decomposition methods

HPDDM is a collection of preconditioners based on the domain decomposition paradigm, with or without overlap. They can be used to solve large linear systems, such as those that typically arise from the discretization of partial differential equations. These preconditioners can be used with various Krylov methods. The library can be used from C, C++, Python or Fortran codes.

Project Leader : Pierre JOLIVET

01/12/2022

A spline-assisted, image-based mechanical digital twin for the analysis of real lattice structures

Project Leader : Pierre Jolivet

01/10/2022

FiXiF - Reliable fixed-point implementation of linear signal processing (and control) algorithms

FiXiF is a suite of tools for implementing filters on embedded systems (such as DSPs, microcontrollers, FPGAs or ASICs) while accounting for the impact of finite precision (fixed-point and floating-point).

Project Leader : Thibault HILAIRE

01/08/2017

PROMISE - PRecision OptiMISE

PROMISE is a software tool that automatically determines the appropriate precision of variables in a numerical code.

Project Leader : Fabienne JEZEQUEL

01/01/2016

ExBLAS - Exact Basic Linear Algebra Subprograms

ExBLAS provides a high-performance version of the fundamental linear algebra algorithms whose results are accurate and reproducible.
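
Why reproducibility is an issue at all can be shown with a short Python sketch. It does not use ExBLAS: the `naive_sum` helper and the sample values are hypothetical, and the standard-library `math.fsum` stands in here for a correctly rounded summation.

```python
import math


def naive_sum(values):
    """Accumulate left to right in double precision, rounding at every step."""
    total = 0.0
    for v in values:
        total += v
    return total


# The same four numbers in two different orders (hypothetical sample data).
order_a = [1e16, 1.0, -1e16, 1.0]
order_b = [1.0, 1.0, 1e16, -1e16]

print(naive_sum(order_a), naive_sum(order_b))   # results depend on the order
print(math.fsum(order_a), math.fsum(order_b))   # same result for both orders
```

Floating-point addition is not associative, so a parallel reduction that changes the order of operations can change the result of a naive sum. A correctly rounded sum does not depend on the order of its inputs.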

Project Leader : Stef GRAILLAT

01/01/2014

SAM - Stochastic Arithmetic in Multiprecision

SAM is a library for estimating and controlling the propagation of rounding errors in arbitrary-precision programs.

Project Leader : Fabienne JEZEQUEL

01/01/2010

CADNA - Control of Accuracy and Debugging for Numerical Application

CADNA is a library for performing scientific computing while estimating and controlling the propagation of rounding errors.

Project Leader : Fabienne JEZEQUEL

10/01/1992

Team : PolSys

Post-Quantum Multivariate Cryptography

The PQMC (Post-Quantum Multivariate Cryptography) project aims to study, design and implement new cryptographic schemes based on multivariate problems, as part of the transition to standards that resist quantum computing. Led by CNRS (coordinator), the project brings together seven academic and industrial partners, including LIP6 (Sorbonne Université), which focuses on algorithmic aspects, asymptotic security and complexity estimates for attacks. The goal is to identify robust, efficient and standardizable cryptographic primitives, notably in the context of the post-quantum standardization process initiated by NIST. The ANR funding allocated to Sorbonne Université for LIP6 amounts to €217,494.38, mainly covering non-permanent staff, scientific equipment and operating costs. Project duration: 48 months, starting 1 October 2025.

Project Leader : Mohab Safey

01/10/2025

Fast computation of multivariate algebraic relations

Project Leader : Vincent Neiger

01/10/2023

Efficient Algorithms for Guessing, Inequalities, Summation

Project Leader : Jeremy Berthomieu

01/10/2022

Team : QI

Quantum Internet Alliance - Phase 2

Project Leader :

01/01/2026

Quantum Competitiveness Alignment, Scaling, and Support

Project Leader :

01/01/2026

Higher-order quantum operations with known states

Project Leader : Marco Quintino

01/10/2025

Designing, Managing and Debugging Quantum Networks

Project Leader :

01/01/2025

Quantum sensor networks

Project Leader : Damian Markham

01/10/2024

Hardware security module for computing in a secure quantum cloud

Project Leader : Elham Kashefi

01/01/2024

A quantum network of distributed sensors

Project Leader : Eleni Diamanti

01/10/2023

Light-based quantum computers in discrete and continuous variables

Project Leader : Frederic Grosshans

01/10/2023

Scalable Continuous Variable Cluster State Quantum Technologies

Project Leader : Damian Markham

01/11/2022

Near term quantum devices: complexity, verification and applications

C22/1651

Project Leader : Alex Bredariol-Grilo

01/10/2022

Quantum technologies: Education and training to fulfill the strategic skill needs of research and industry in France

Project Leader : Eleni Diamanti

01/09/2022

Quantum communication testbeds

Project Leader : Eleni Diamanti

01/07/2022

Quantum key distribution with black boxes

Project Leader : Damian Markham

01/07/2022

National Hybrid HPC-Quantum Initiative - R&D and community support

Project Leader : Damian Markham

01/04/2022

From NISQ to LSQ: bosonic corrector codes and LDPC

Project Leader : Frederic Grosshans

01/01/2022

Study of the quantum stack: algorithms, models of computation and simulation for quantum computing

Project Leader : Damian Markham

01/01/2022

High Performance Computer – Quantum Simulator hybrid

Project Leader : Elham Kashefi

01/12/2021

National Hybrid HPC-Quantum Initiative - Acquisition

Project Leader : Elham Kashefi

24/11/2021

Team : RO

DynaBBO - Dynamic Selection and Configuration of Black-box Optimization Algorithms

DynaBBO (Dynamic Selection and Configuration of Black-box Optimization Algorithms) is an ERC Consolidator research project started in October 2024 and coordinated by Carola Doerr. It aims at improving black-box optimisation techniques, which industry relies on heavily and which are based on repeated experiments or numerical simulations to evaluate candidate solutions to a problem.

Project Leader : Carola Doerr

01/10/2024

Algorithms with predictions

Project Leader : Spyros Angelopoulos

01/10/2023

Bridging Black-box Optimization and Machine Learning for Dynamic Algorithm Configuration

Project Leader : Carola Doerr

01/10/2023

THéorie et observation Empirique pour Mesurer l’Influence dans les Structures sociales (Theory and empirical observation to measure influence in social structures)

In the literature of cooperative games, the notion of power index has been widely used to evaluate the "influence" of individual players (e.g., voters, political parties, nations, etc.) involved in a collective decision process, i.e. their ability to force a decision in situations like an electoral system, parliament, a governing council, a management board, etc. In practical situations, however, the information concerning the strength of coalitions and their effective possibilities of cooperation is not easily accessible due to heterogeneous and hardly quantifiable factors about the performance of groups, their bargaining abilities, moral and ethical codes and other "psychological" attributes (e.g., the power obtained by threatening not to cooperate with other players). So, any attempt to numerically represent the influence of groups and individuals conflicts with the complex and multi-attribute qualitative nature of the problem. Previous applications of cooperative games show that this type of qualitative information is central for the evaluation of the individual influence in voting systems and in social networks, the degree of acceptability of arguments in a debate, or the importance of criteria in a multi-criteria decision-making process, etc.
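
As a concrete example of a classical numerical power index, the sketch below computes the normalized Banzhaf index of a weighted voting game. It illustrates the standard notion only, not the qualitative approach this project investigates; the function name, the player names and the weights are hypothetical.

```python
from fractions import Fraction
from itertools import combinations


def banzhaf_index(weights, quota):
    """Normalized Banzhaf index of each player in a weighted voting game.

    A player is critical in a winning coalition if the coalition loses
    once that player leaves. The index is the player's share of all such swings.
    """
    players = list(weights)
    swings = {p: 0 for p in players}
    for size in range(1, len(players) + 1):
        for coalition in combinations(players, size):
            total = sum(weights[p] for p in coalition)
            if total < quota:
                continue  # losing coalition: nobody is critical
            for p in coalition:
                if total - weights[p] < quota:
                    swings[p] += 1
    all_swings = sum(swings.values())
    if all_swings == 0:  # no winning coalition exists
        return {p: Fraction(0) for p in players}
    return {p: Fraction(swings[p], all_swings) for p in players}


# Hypothetical assembly: three parties, 51 votes needed to pass a decision.
index = banzhaf_index({"A": 50, "B": 49, "C": 1}, quota=51)
print(index)
```

In this example B holds 49 votes and C only 1, yet both get the same index, because each of them can form a majority only together with A. Voting weight and influence are therefore different things, even before the qualitative factors discussed above are taken into account.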

Project Leader : Fanny Pascual

Partners : université Paris Dauphine CNRS Hauts de France

01/10/2020

Team : SMA

Trustworthy AI for the Written Press

Project Leader : Gauvain Bourgne

01/01/2025

Applications and implications of artificial intelligence in science

Project Leader : Jean-Gabriel Ganascia

01/01/2024

PEPR Ensemble - Managing knowledge-production collectives at scale

Project Leader : Nicolas Maudet

01/09/2023

https://www.pepr-ensemble.fr/congrats-collaboration-a-grande-echelle/

An argumentation platform for democracy

Project Leader : Nicolas Maudet

01/01/2023

Team : SYEL

Adaptive architectures for embedded artificial intelligence

Project Leader : Andrea Pinna

01/10/2023

Embedded artificial intelligence and ingestible capsules (LabCom BodyCAP)

Project Leader : Andrea Pinna

01/01/2022